What you should know about visionOS volumes before using them in an app

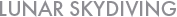

In visionOS, content can be displayed in windows, volumes, and spaces.

Windows and spaces generally work as advertised, but volumes have several limitations you should be aware of before designing your app around them.

16 things you should know

If you intend to support visionOS 1.x, be aware that before visionOS 2.0, volumes could not be resized by the user or in code.

Starting with visionOS 2.0, volumes are resizable by default. If you don’t want your volume to be resizable, you’ll need to add frame(width:height:) and frame(depth:) view modifiers to its content.
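As a rough illustration, a fixed-size volume might be declared along these lines. This is a minimal sketch, not a definitive recipe: the app and view names are hypothetical, the 0.5 meter size is arbitrary, and the use of windowResizability(.contentSize) and the PhysicalMetric property wrapper (to express the frame in meters rather than points) are my assumptions about how to make the frame modifiers take effect.

```swift
import SwiftUI
import RealityKit

@main
struct FixedVolumeApp: App {   // hypothetical app name
    var body: some Scene {
        WindowGroup(id: "fixed-volume") {
            FixedVolumeView()
        }
        .windowStyle(.volumetric)
        // Initial size of the volume, in meters.
        .defaultSize(width: 0.5, height: 0.5, depth: 0.5, in: .meters)
        // Assumption: derive the volume's size from its content's frame,
        // so the fixed frame below leaves no room for resizing.
        .windowResizability(.contentSize)
    }
}

struct FixedVolumeView: View {   // hypothetical view name
    // Converts 0.5 meters into the points that frame modifiers expect.
    @PhysicalMetric(from: .meters) private var side: CGFloat = 0.5

    var body: some View {
        RealityView { content in
            // Placeholder content: a small red sphere.
            let sphere = ModelEntity(
                mesh: .generateSphere(radius: 0.1),
                materials: [SimpleMaterial(color: .red, isMetallic: false)]
            )
            content.add(sphere)
        }
        // Fixed width, height, and depth, as described above.
        .frame(width: side, height: side)
        .frame(depth: side)
    }
}
```

Test this on each visionOS version you support, since the resizing behavior differs between 1.x and 2.0.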

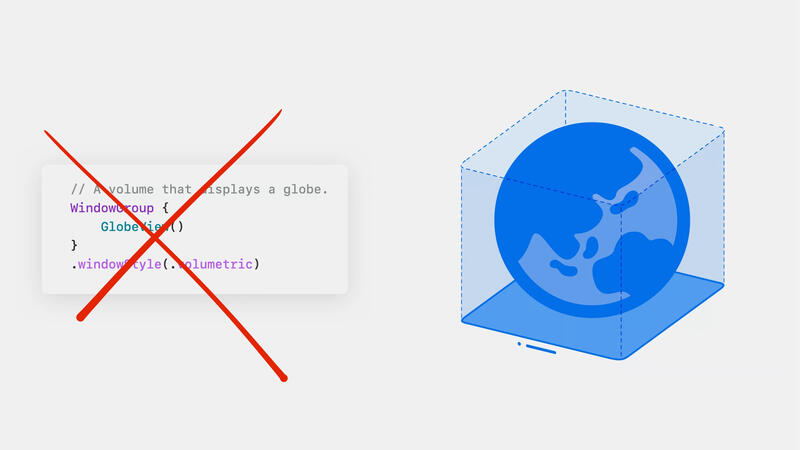

Volumes cannot be created with a size larger than a 2 meter cube. If you attempt to create a volume larger than that, and place an object of the same size inside it, like the 2.1 meter wide sphere below, it will be clipped to the bounds of the volume. It’s possible this size limit may change in future visionOS releases.

In visionOS 1.0, the 2 meter maximum size limit assumes the user’s Window Zoom preference is set to Large, which is the default setting. In the Settings app, users can navigate to Appearance > Display > Appearance > Window Zoom to change it. The size limit has a different value for each Window Zoom setting. For example, when Window Zoom is set to Small, as shown below, the size limit is about 1.47 meters in visionOS 1.0. In visionOS 1.1 and later, Window Zoom no longer affects the size of volumes.

In visionOS 1.x, your volume may change size depending on the user’s Window Zoom setting, even if it’s smaller than the size limits described earlier.

To address this issue, it’s critical that you use GeometryReader3D in conjunction with your volume’s RealityView to scale the contents of your volume to compensate for the size change.

Otherwise, the contents of your volume may appear clipped.
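The general shape of this technique can be sketched as follows. This is only an approximation under stated assumptions, not the code from my blog post: the view name, the "root" entity name, the designSize constant, and the uniformScale helper are all hypothetical, and the sketch assumes the scene was authored to fill a cube of designSize meters.

```swift
import SwiftUI
import RealityKit

/// Hypothetical helper: the uniform scale that fits content authored for a
/// cube of `designSize` meters into a volume with the given extents.
func uniformScale(fitting designSize: Float, into extents: SIMD3<Float>) -> Float {
    guard designSize > 0 else { return 1 }
    // Use the smallest dimension so the content is never clipped.
    return extents.min() / designSize
}

struct ScaledVolumeView: View {   // hypothetical view name
    // Assumption: the size, in meters, that the scene was authored for.
    private let designSize: Float = 1.0

    var body: some View {
        GeometryReader3D { proxy in
            RealityView { content in
                // Parent all scene content to one root entity so that it
                // can be scaled as a unit.
                let root = Entity()
                root.name = "root"
                root.addChild(ModelEntity(
                    mesh: .generateBox(size: designSize),
                    materials: [SimpleMaterial(color: .gray, isMetallic: false)]
                ))
                content.add(root)
            } update: { content in
                guard let root = content.entities.first(where: { $0.name == "root" })
                else { return }
                // The volume's actual bounds, in meters, in the scene's
                // coordinate space.
                let bounds = content.convert(
                    proxy.frame(in: .local), from: .local, to: .scene
                )
                let scale = uniformScale(fitting: designSize, into: bounds.extents)
                root.scale = SIMD3<Float>(repeating: scale)
            }
        }
    }
}
```

For example, if the volume ends up 0.735 meters on each side, uniformScale(fitting: 1.0, into: SIMD3<Float>(repeating: 0.735)) returns 0.735, and the root entity shrinks to match.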

This requirement is undocumented, and Apple’s sample code does not demonstrate how to do it.

This was the case for all uses of volumes in visionOS 1.0.

In visionOS 1.1, the situation has improved somewhat, but there are still a few edge cases you must be aware of if you don’t want your volumes to appear broken.

See my blog post about this issue for more details.

You may also want to use the code from that post in visionOS 2.0, where volumes are resizable by default. Without it, resizing the volume can clip its contents.

Apps can’t set the initial position of a volume. If your volume contains a scene like a diorama that is best viewed from the perspective of the user looking down into the scene from a steep angle, there’s no way for the app to present the volume in a position that will facilitate this. The volume will most likely be positioned so that it is viewed from the side, as if it were a window. The user must manually move your volume down each time it is presented.

Like windows, volumes are automatically placed directly in front of the user when they first appear. If the user moves the volume to a preferred position, for example, by placing a virtual teddy bear on a real world table, there’s no way for the app to restore the teddy bear to this position the next time the app launches. Furthermore, if the user long-presses the Digital Crown, the volume will be repositioned in front of the user along with other windows.

In visionOS 1.0, the initial position of a large volume may extend behind the camera. The user may find themselves inside the volume. This appears to be fixed in visionOS 1.1, but if your app needs to be compatible with visionOS 1.0, you should be aware of this issue.

The volume’s window bar is always placed at the bottom edge of the volume. There’s no way to place 3D content at a level below the window bar. If your scene has the form of a diorama with subterranean roots and pipes dangling below it, and placing the window bar at ground level would provide the best user experience, there’s no way to do that.

In the shared space, the position of the camera relative to a volume’s coordinate space can’t be determined by your app. If you place an animated character in a volume, there’s no way to make the character turn to face the user and make eye contact. However, Reality Composer Pro shader graphs are able to query the camera position, so if you want to implement billboard polygons, you can do it that way, although this is rather involved.

Hand tracking doesn’t work with volumes. Custom gestures cannot be implemented in a volume. However, the standard system gestures are available and may be sufficient for your use case.

In the shared space, you can’t use ray casting to perform picking in the context of a volume because there’s no way to determine the camera position. However, in an immersive space, where the camera position can be polled, starting in visionOS 1.1, it’s now possible to convert between the coordinate spaces of an immersive space and a volume using RealityViewContent/convert(transform:from:to:) and the new immersiveSpace named coordinate space. This can facilitate picking based on ray casting.

An app that only has a volume can’t prompt the user to rate the app.

The undocumented requestReview SwiftUI environment action doesn’t work within the context of a volume.

You’ll see the error message “Presentations are not permitted within volumetric window scenes” and your app will terminate.

A workaround is to first open a window and then present the app review dialog from that window.
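A minimal sketch of this workaround, assuming an app with one volume and one ordinary window, might look like the following. The app name, view names, window identifiers, and button labels are hypothetical. The sketch relies on the openWindow and requestReview environment actions.

```swift
import SwiftUI
import StoreKit
import RealityKit

@main
struct TeddyBearApp: App {   // hypothetical app name
    var body: some Scene {
        // The app's main volume.
        WindowGroup(id: "volume") {
            VolumeView()
        }
        .windowStyle(.volumetric)

        // A regular window whose only job is to host the review prompt.
        WindowGroup(id: "review") {
            ReviewWindowView()
        }
        .defaultSize(width: 400, height: 200)
    }
}

struct VolumeView: View {
    @Environment(\.openWindow) private var openWindow

    var body: some View {
        VStack {
            RealityView { content in
                // Placeholder content standing in for the teddy bear.
                content.add(ModelEntity(
                    mesh: .generateSphere(radius: 0.1),
                    materials: [SimpleMaterial(color: .brown, isMetallic: false)]
                ))
            }
            // Don't call requestReview here. Open a regular window instead.
            Button("Rate this app") {
                openWindow(id: "review")
            }
        }
    }
}

struct ReviewWindowView: View {
    @Environment(\.requestReview) private var requestReview

    var body: some View {
        VStack(spacing: 20) {
            Text("Enjoying the app?")
            // Presenting the system review dialog from a regular window
            // avoids the volumetric-scene restriction described above.
            Button("Leave a review") {
                requestReview()
            }
        }
        .padding()
    }
}
```

The system decides whether the review dialog actually appears, so the button may do nothing visible on some presses.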

You may experience issues if you attempt to present other system views as well.

Watch out for subtle bugs in SwiftUI controls in attachments added to volumes.

For example, in some visionOS versions, picker images are displayed in a dimmed state. (Actually, upon further investigation just now, this turns out to be broken in visionOS 1.1 for regular windows as well, not just for volumes. But in an earlier visionOS 1.0 beta, it was broken only for volumes and not windows.)

Or, images specified as symbols may look fine, but picker images defined in asset catalogs will appear extremely dim.

I’ve reported fundamental bugs with RealityView attachments frequently enough that I get the impression they are not well-tested.

Do not blindly assume they will work reliably in all cases.

Unlike SwiftUI controls in windows, SwiftUI controls in volumes don’t take precedence over the Control Center indicator, which may affect usability when the positions of these elements coincide in the user’s field of view.

None of the first-party apps Apple ships with visionOS use volumes. Consequently, it may be more likely that when new visionOS versions are released, you’ll experience bugs that Apple engineers didn’t notice earlier in the release cycle. If you use a volume in your app, it’s particularly critical that you test your app thoroughly against each new visionOS beta release and report any new bugs you find immediately.

If your app supports older visionOS versions, it’s important to test your code thoroughly on each of them. As described above, the behavior of volumes has changed significantly across visionOS 1.0, 1.1, and 2.0. Be aware of the possibility of future breaking changes.

If necessary, use an immersive space instead

If, after considering the limitations described above, you decide not to use volumes in your app, you may choose to display your 3D content in an immersive space instead. By doing so, you’ll avoid many of the restrictions described above.

However, if you use an immersive space, you will no longer be able to display your app’s 3D content in the shared space alongside other apps. If that’s too limiting, you should continue to use volumes, but attempt to redesign your app around their limitations.

Also, if you do decide to use an immersive space, your 3D content will no longer include the window bar that volumes provide, and you’ll have to implement equivalent functionality yourself.

Volumes are fine if your needs are minimal

The risk of using volumes in your app is probably minimal if all of the following are true: your volume is only 1 meter in size, it contains a static object, you use the code in my other blog post to create your volume instead of Apple’s sample code, and your volume does not require feature-rich SwiftUI attachments or other interaction.

If you only want to use a volume to display a small inert teddy bear, you’re probably fine.